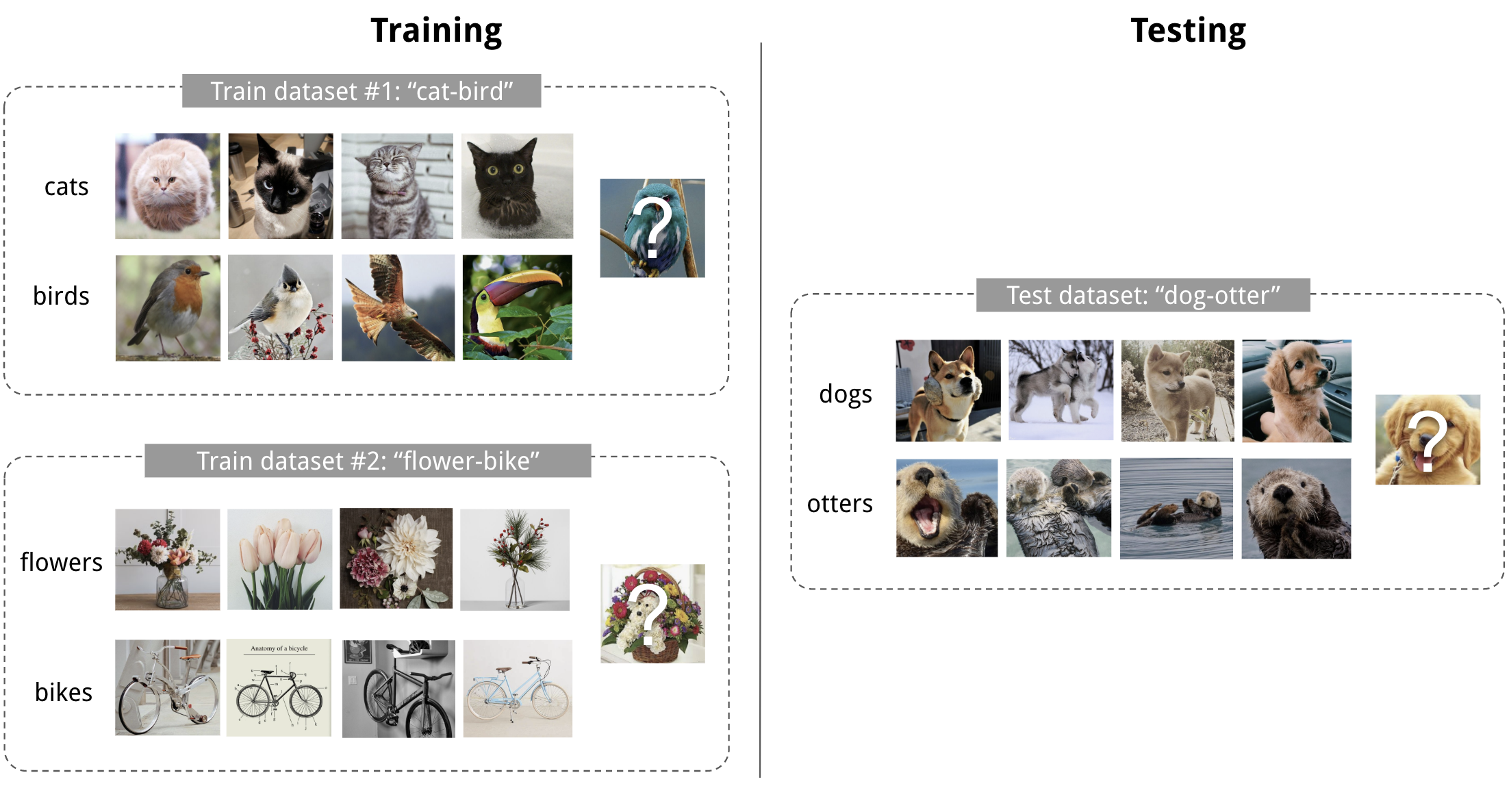

First, we need to collect meta-data that describe prior learning tasks and previously learned models. The challenge in meta-learning is to learn from prior experience in a systematic, data-driven way. Likewise, when building machine learning models for a specific task, we often build on experience with related tasks, or use our (often implicit) understanding of the behavior of machine learning techniques to help make the right choices. In short, we learn how to learn across tasks. With every skill learned, learning new skills becomes easier, requiring fewer examples and less trial-and-error. We start from skills learned earlier in related tasks, reuse approaches that worked well before, and focus on what is likely worth trying based on experience. Using more cores leads to faster training but at the expense of more memory consumption, especially for large training datasets.When we learn new skills, we rarely – if ever – start from scratch. Parallelism: Number of cores used for parallel training. If set to true, the learning algorithm will use two separate subsets of the train set to compute the optimal split and the value of a node. Honest: Whether or not the honest framework is used to build trees. Lower values increase the quality of the prediction (by splitting the tree mode), but can lead to overfitting and increased training and prediction time. Minimum samples per leaf: Minimum number of samples required in a single tree node to split this node. Use 0 for unlimited depth (i.e., keep splitting the tree until each node contains the minimum number of samples per leaf), Higher values also increase the training and prediction time. Higher values generally increase the quality of the prediction but can lead to overfitting. Maximum depth of tree: Maximum depth of each tree in the causal forest. 
Square root and Logarithm will select the square root or base 2 logarithm of the number of features respectivelyįixed number will select the given number of featuresįixed proportion will select the given proportion of features This parameter can be optimized.įeature sampling strategy: Adjusts the number of features to sample at each split.Īutomatic will select 30% of the features. Increasing the number of trees in a causal forest does not result in overfitting. Number of trees: Number of trees in the causal forest. The honest framework (whenever enabled) allows using two separate subsets of the train set to compute the optimal split and the value of a node. The splitting criterion is also based on both the treatment and the outcome variables The value of nodes and leaves are based on both the treatment and the outcome variables - they are an estimation of the Conditional Average Treatment Effect (CATE) Causal forest ¶Ĭausal Forests are tree-based ensemble models similar to Random Forests. You can combine any meta-learner with any available Python-based ML algorithm as a base learner. The CATE prediction is the average prediction of these last two models weighted by a predicted propensity (individual predicted probability of getting the treatment). Then, two other models (again, one for treated group and one for control group) are trained on the individual effects previously predicted.

control for treated group and treated for control group), which is combined with the observed outcome to estimate the individual treatment effect. They are used to individually predict the counterfactual outcome (i.e. Two models are trained, one on the treated group, the other on the control group (same as T-learner). The predicted CATE is the difference between the predictions from the two models. T-learner: two models are trained, one on the treated group, the other on the control group.

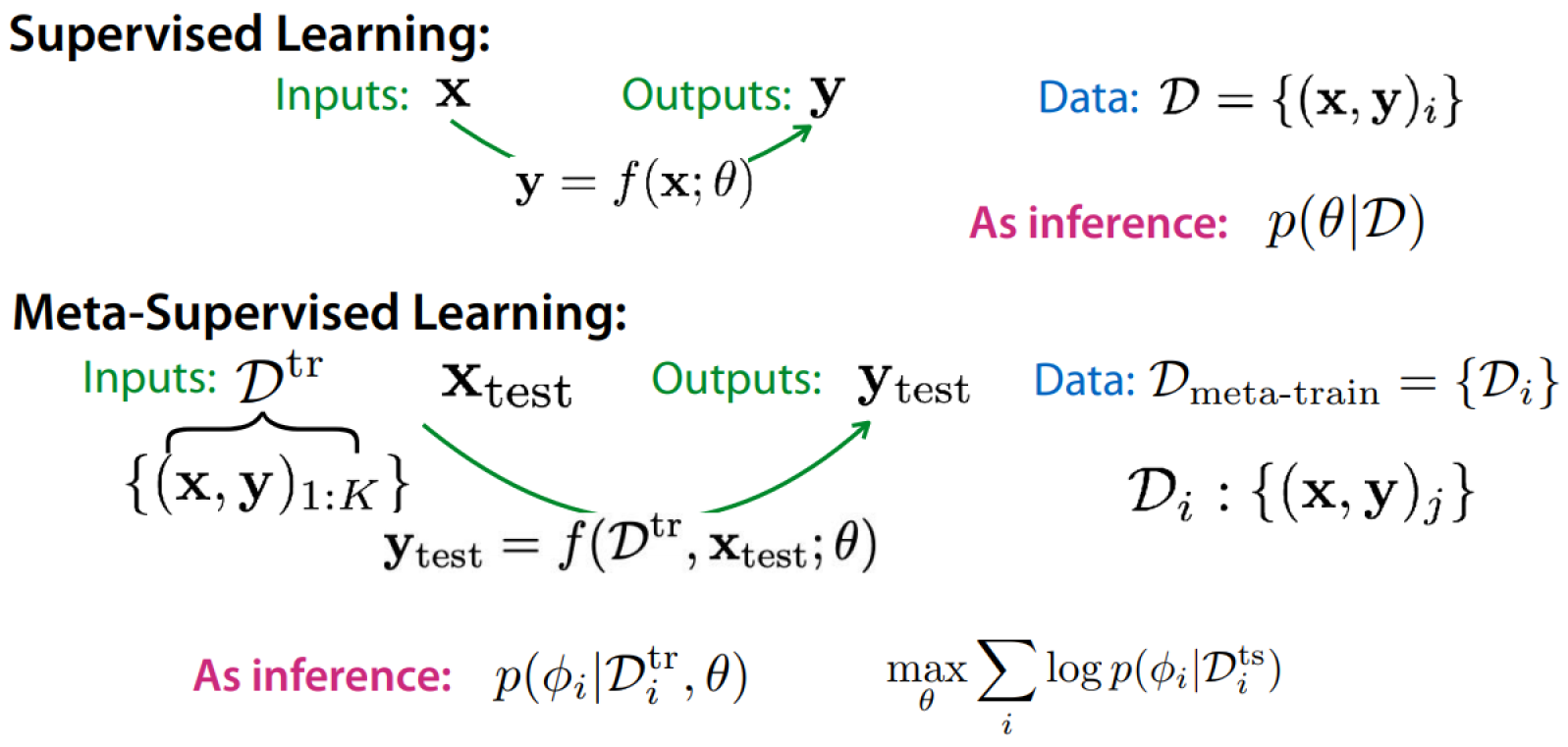

The predicted CATE is the difference between the prediction with the treatment variable set as treated and the prediction with the treatment variable set as control. S-learner: a single model is trained with the treatment variable as an input feature. The specific way they are trained and combined is referred to as the meta-learner. These ML models are called base learners. Meta-learning leverages machine learning algorithms to learn the causal effect of the treatment (Conditional Average Treatment Effect or CATE).
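To make the S-, T- and X-learner descriptions concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the implementation of any particular product. The base learner (`knn_fit`, a tiny nearest-neighbour averager), the synthetic data generator `make_data`, and all function names are hypothetical. The propensity weighting in `x_learner` follows one common formulation of the X-learner and may differ from what a given tool does.

```python
import random


def knn_fit(X, y, k=15):
    """Hypothetical base learner: average the outcomes of the k nearest rows."""
    rows = list(zip(X, y))

    def predict(x):
        dist = lambda r: sum((a - b) ** 2 for a, b in zip(r[0], x))
        nearest = sorted(rows, key=dist)[:k]
        return sum(out for _, out in nearest) / len(nearest)

    return predict


def split(X, t, y, arm):
    """Rows and outcomes of one treatment arm (1 = treated, 0 = control)."""
    keep = [i for i, ti in enumerate(t) if ti == arm]
    return [X[i] for i in keep], [y[i] for i in keep]


def s_learner(X, t, y, fit=knn_fit):
    # One model, with the treatment appended as an extra input feature.
    model = fit([x + (ti,) for x, ti in zip(X, t)], y)
    return lambda x: model(x + (1,)) - model(x + (0,))


def t_learner(X, t, y, fit=knn_fit):
    # Two models, one per arm; the CATE is the difference of their predictions.
    mu1, mu0 = fit(*split(X, t, y, 1)), fit(*split(X, t, y, 0))
    return lambda x: mu1(x) - mu0(x)


def x_learner(X, t, y, fit=knn_fit):
    (X1, y1), (X0, y0) = split(X, t, y, 1), split(X, t, y, 0)
    mu1, mu0 = fit(X1, y1), fit(X0, y0)            # first stage, as in the T-learner
    d1 = [yi - mu0(x) for x, yi in zip(X1, y1)]    # observed minus predicted control
    d0 = [mu1(x) - yi for x, yi in zip(X0, y0)]    # predicted treated minus observed
    tau1, tau0 = fit(X1, d1), fit(X0, d0)          # second stage, on imputed effects
    propensity = fit(X, t)                         # estimated P(treated | x)

    def cate(x):
        e = propensity(x)
        # Assumed weighting: e * tau0 + (1 - e) * tau1.
        return e * tau0(x) + (1 - e) * tau1(x)

    return cate


def make_data(n=600, seed=0):
    """Synthetic data whose true effect at x is 1 + x (an assumption for the demo)."""
    rng = random.Random(seed)
    X, t, y = [], [], []
    for _ in range(n):
        x, ti = rng.random(), int(rng.random() < 0.5)
        X.append((x,))
        t.append(ti)
        y.append(2 * x + ti * (1 + x) + rng.gauss(0, 0.1))
    return X, t, y


X, t, y = make_data()
for name, learner in [("S", s_learner), ("T", t_learner), ("X", x_learner)]:
    # The true effect at x = 0.5 is 1.5 by construction of make_data.
    print(name, round(learner(X, t, y)((0.5,)), 2))
```

Each learner takes the base learner as its `fit` argument, which mirrors the idea that any meta-learner can be paired with any base learner. The nearest-neighbour averager is only a stand-in chosen to keep the sketch dependency-free.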
